By 2035, will AI and related technologies change what it means to be human for the better, or worse?

Good afternoon from Washington, where spring is in full swing and the imposition of historic tariffs is poised to further disrupt the economy of the United States and those of our trading partners around the world.

For this American, "Liberation Day" will continue to refer to "Victory in Europe Day," Emancipation Day, or Juneteenth. Those historic days recognize when our union collectively achieved far greater victories against fascism abroad or chattel slavery at home.

Our young democracy remains flawed by design, but celebrating these days reminds us all of who we have been and what our union could still become: a thriving, multiracial, pluralistic liberal democracy based upon a Constitution under which equal justice under law is not just a broken promise graven upon the Supreme Court.

Many thanks to everyone who has subscribed to Civic Texts since I last wrote. Welcome to new subscribers! You can all always reach me at alex@governing.digital, 410-849-9808, or directly on Signal using a non-government computing device if you need to ensure our correspondence remains free from surveillance.

How will AI have changed what it means to be human in 2035?

A new report (PDF) from the Imagining the Digital Future Center at Elon University features insights from a global survey of technology experts about what being human will mean in 2035. Your faithful correspondent was one of 301 folks included, which I hope will not overly detract from the insights therein.

The canvassing is a non-scientific survey, but it's a fascinating collection of insights. You can download the full report, read about the research methodology or browse hundreds of other essays online in Part I, Part II, Part III and Part IV.

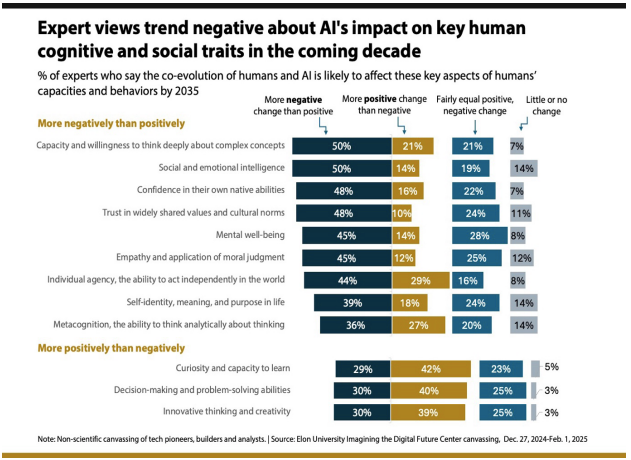

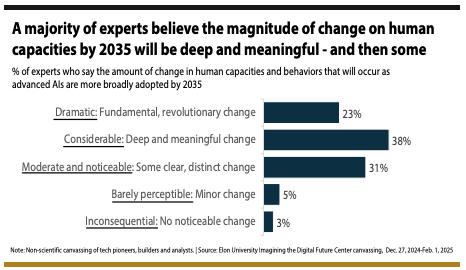

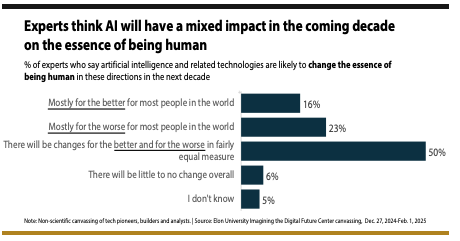

A majority of those folks surveyed expect that "the likely magnitude of change in humans’ native capacities and behaviors" as we adapt to artificial intelligence (AI) over the next ten years "will be 'deep and meaningful'." Some of us predict fundamental or revolutionary shifts in what it means to be human, at least for those of us lucky or cursed to be connected in 2025.

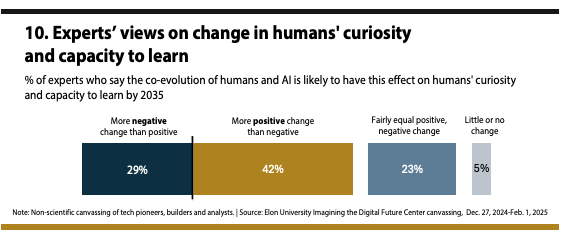

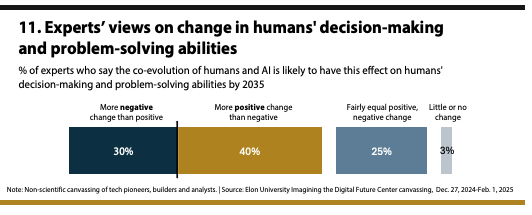

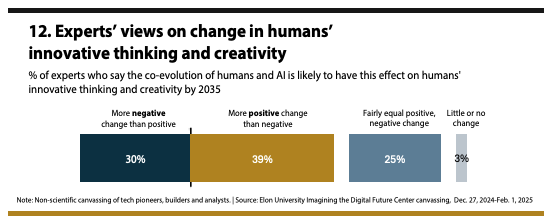

Majorities of us expect a negative impact for many of the areas surveyed, though the positive potential for AI to augment human creativity, learning, and generativity is a notable exception. My own essay follows.

As with Internet connectivity, smartphones, social media, and the "metaverse," we should expect to see generative artificial intelligence adopted and adapted unequally across humanity, with differing impact in each cultural and societal context.

Nations that have strong data protection laws, healthy institutions, constitutions that center human rights and civil liberties, and fundamentally open, democratic systems will have the best chance at mitigating the worst impacts of automation, algorithmic regulation, and successive generations of more capable agents.

We should expect to see positive applications of AI in education, the sciences, entertainment, manufacturing, medicine, and governance, based upon the early signals we see in 2025.

If nations and states can turn the global tide of authoritarianism back towards democracy, we will see billions of humans use AI to augment how we work, learn, play, and share. Human nature itself will not fundamentally change, but the nature of being human will be influenced by this shift.

Nations with closed, authoritarian systems of governance will be far more challenged to respond to the impact of AI. If states do not enact data protection laws, insist upon open standards, and enact guardrails for how, when, and where AI is used, then we will see AI used for coercion, control, and repression of dissent.

The early returns from automation suggest that as technology becomes more advanced and abstracted away from our direct control, human understanding of the machines, systems, and processes that govern our lives diminishes, along with the agency to change them.

Much thus depends upon legislatures not only increasing their own capacity for oversight of AI, but developing insight and foresight about how and where it is adopted and for what purpose.

The experience of being human will not fundamentally shift in the next decade, but our understanding of what humans are good at doing versus more intelligent agents will.

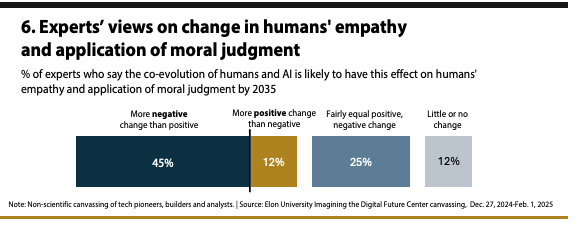

While we will see computational capacity to discern trends in noisy data that surpasses that of humans, our ability to create great art, offer empathy or compassion, or to emotionally connect with animals and one another will continue to distinguish us from the machines we create, despite advances in simulacra.

We are likely to see the emergence of agents that provide services, education, and diagnoses to people who can no longer afford to be taught or seen by a human, which will risk depriving generations of the benefit of mentors and doctors.

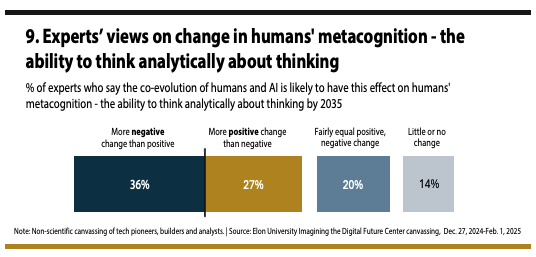

The higher order consciousness that has distinguished humans from most other living beings will continue to define our humanity, but our sense of self will be challenged by personalized agents that eerily predict our interests, needs, desires, or flaws.

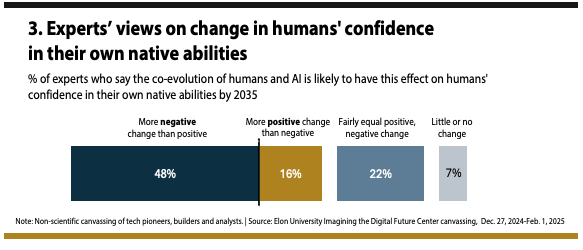

We will see the emergence of a delta between students and professionals who overly depend upon AI and people who retain the capacity for computation and critical thinking.

This will become an acute risk for societies should connectivity be broken by increasingly extreme natural disasters or Internet shutdowns, much in the same way that disruption to the global positioning system disproportionately impacts generations who have never had to navigate the world without smartphones and dashboard computers.

The disappearing capacity to "drive stick" in a car or take over manual control of an aircraft on autopilot will have parallels across society, if AI leads to more abstraction across industries and professions.

Invisible algorithmic "barbed wire" could prevent clerks, administrators, teachers, and nurses from applying intuition to help people caught in technical systems, or from even understanding what happened.

The augmentation of human intellect, capacity, and experience that we see today through increasingly ubiquitous access to information over the Internet might also shift if the services people depend upon are degraded by synthetic data, AI-generated slop, and biased data sets.

If knowledge is power, then that future must be avoided at all costs.